How to Run an AI Search Visibility Audit: The 2026 Playbook for Content Teams

- An AI search visibility audit measures whether your brand is cited, summarized, or excluded across answer engines

- Ranking reports alone miss the new answer layer where many discovery journeys now begin and end

- The best audits combine prompt testing, citation tracking, content gap analysis, and technical crawl review

- At Cogni, we treat AI visibility as an operational metric, not a vague brand-awareness concept

Most content teams are still measuring the old internet.

They track rankings, sessions, backlinks, click-through rate, and maybe assisted conversions. Those metrics still matter. They are also no longer enough to explain why one brand keeps showing up in AI answers while another with comparable domain authority disappears.

The missing layer is answer visibility.

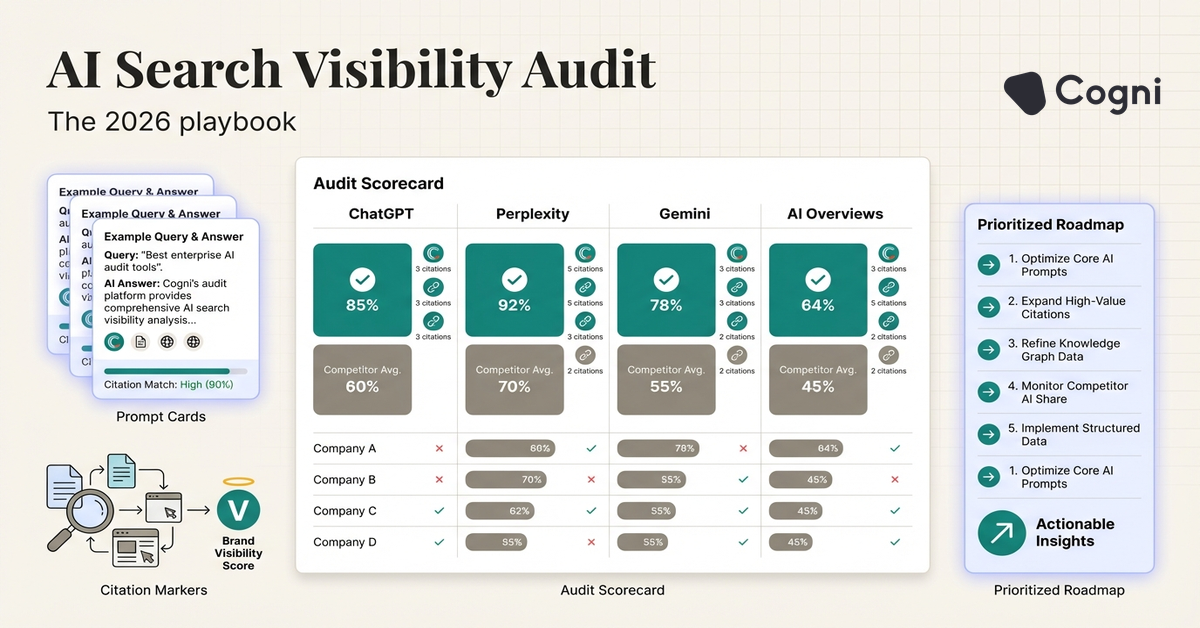

An AI search visibility audit is a structured review of how often, where, and why your brand appears in AI-generated answers across systems like ChatGPT, Perplexity, Gemini, and Google AI Overviews. It is part content audit, part retrieval audit, and part brand discoverability analysis.

This is becoming a board-level issue faster than many teams realize. In 2024, Gartner predicted that traditional search engine volume would drop 25% by 2026 as users shift toward AI chatbots and other virtual agents. According to Adobe’s 2025 survey of U.S. consumers, AI search usage for product research and recommendations kept rising sharply, especially among younger, high-intent users. And the Generative Engine Optimization (GEO) paper presented at KDD 2024 showed that specific content changes, such as citing sources, adding statistics, and including quotations, can materially improve visibility in generative search systems.

Once the answer layer starts taking demand before the click, you need a way to measure it.

Why a normal SEO audit is no longer enough

A traditional SEO audit asks whether your content can rank. An AI visibility audit asks whether your content can be retrieved, trusted, and cited inside a generated answer.

Those are related questions, but not identical.

A page can rank well and still fail in AI search because:

- the answer is buried below unnecessary intro copy

- key claims lack named evidence

- the site has multiple overlapping pages for the same concept

- the page is crawlable but not easy to synthesize

- the brand lacks enough topic-level authority to be selected

I have seen teams assume that top-three rankings mean they are safe. Then they test the same topics in Perplexity and Google AI Overviews and discover that a smaller, more structured competitor owns the answer layer.

The five questions every AI visibility audit should answer

A useful audit should answer five things clearly.

1. Where does the brand appear today?

You need a baseline across platforms. Test your target prompts in ChatGPT search, Perplexity, Gemini, Google AI Overviews, and any relevant vertical assistants. Log whether your brand is cited, summarized without citation, mentioned as an example, or absent.
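A spreadsheet works fine for the baseline, but it helps to fix the vocabulary before anyone starts testing. Here is a minimal sketch of a log record, assuming you test prompts by hand and record one row per prompt/platform pair. The class names, field names, and example URL are hypothetical and are not part of any particular tool.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Presence(Enum):
    """The four outcomes to log for each prompt/platform test."""
    CITED = "cited"
    SUMMARIZED_WITHOUT_CITATION = "summarized_without_citation"
    MENTIONED_AS_EXAMPLE = "mentioned_as_example"
    ABSENT = "absent"


@dataclass(frozen=True)
class Observation:
    prompt: str
    platform: str                    # e.g. "Perplexity" or "Google AI Overviews"
    presence: Presence
    cited_url: Optional[str] = None  # page the engine linked to, if any
    tested_on: str = ""              # ISO date of the manual test


def presence_rate(observations: list[Observation], platform: str) -> float:
    """Share of tested prompts on one platform where the brand shows up in any form."""
    rows = [o for o in observations if o.platform == platform]
    if not rows:
        return 0.0
    present = sum(1 for o in rows if o.presence is not Presence.ABSENT)
    return present / len(rows)


log = [
    Observation("what is generative engine optimization", "Perplexity",
                Presence.CITED, cited_url="https://example.com/geo-guide"),
    Observation("which tools help track AI citations", "Perplexity", Presence.ABSENT),
]
print(presence_rate(log, "Perplexity"))  # 0.5
```

Keeping the four outcomes as an enum stops "sort of mentioned" from creeping into the data as a fifth, untrackable category.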

2. Which prompts matter commercially?

Not every prompt deserves equal effort. Group prompts into three buckets:

- category education

- comparison and evaluation

- action or implementation

The second and third buckets usually matter most for pipeline.
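If your prompt list is long, a rough first-pass sort into the three buckets saves time before a human reviews it. The sketch below is a crude keyword heuristic. The cue phrases are hypothetical and should be tuned to your own category vocabulary. Comparison cues are checked first, so "how to choose the best X" lands in the comparison bucket.

```python
from enum import Enum


class Bucket(Enum):
    EDUCATION = "category education"
    COMPARISON = "comparison and evaluation"
    IMPLEMENTATION = "action or implementation"


# Hypothetical cue phrases; adjust for your market before relying on them.
COMPARISON_CUES = ("best ", " vs ", "versus", "compare", "alternatives", "which tool")
IMPLEMENTATION_CUES = ("how do i", "how to", "steps to", "set up", "implement")


def classify_prompt(prompt: str) -> Bucket:
    """Assign a prompt to one of the three buckets using simple substring cues."""
    text = f" {prompt.lower()} "  # pad so cues like " vs " also match at the edges
    if any(cue in text for cue in COMPARISON_CUES):
        return Bucket.COMPARISON
    if any(cue in text for cue in IMPLEMENTATION_CUES):
        return Bucket.IMPLEMENTATION
    return Bucket.EDUCATION
```

Treat the output as a draft label. Anything the heuristic gets wrong is usually a sign the prompt mixes intents, and that is worth a second look anyway.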

3. Which content assets are helping or hurting?

Identify the pages most often associated with positive appearances. Then identify weak pages that create ambiguity. Thin glossary pages, duplicate blog posts, and dated statistics often reduce confidence at retrieval time.
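Once each test records which URL was cited, finding the helpful and the silent pages comes down to counting. Here is a minimal sketch, assuming you have a flat list of cited URLs from the audit log (with `None` where nothing was cited) and a hypothetical inventory of pages you expected to matter.

```python
from collections import Counter
from typing import Iterable, Optional


def top_cited_pages(cited_urls: Iterable[Optional[str]], n: int = 5) -> list[tuple[str, int]]:
    """Most frequently cited pages across all logged prompt tests."""
    return Counter(url for url in cited_urls if url).most_common(n)


def never_cited(inventory: Iterable[str], cited_urls: Iterable[Optional[str]]) -> list[str]:
    """Pages you expected to matter that no engine cited during the audit window."""
    cited = {url for url in cited_urls if url}
    return sorted(page for page in inventory if page not in cited)


urls = ["/geo-guide", "/geo-guide", "/ai-overviews-guide", None]
print(top_cited_pages(urls, 1))  # [('/geo-guide', 2)]
```

A page that never gets cited is not automatically a weak page, but it is the natural shortlist for the ambiguity review: thin glossary entries, duplicates, and stale statistics tend to show up here.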

4. What technical barriers are reducing visibility?

AI search audits should include crawl checks, rendering checks, schema checks, and site architecture review. If the best answer page is blocked, slow, or poorly linked, it is easier for another brand to become the cited source.

5. What is the fastest path to improvement?

The audit should end with a prioritized roadmap, not a pile of observations.
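One way to keep the audit pointed at a roadmap is to rank prompts by how much value-weighted visibility is still unclaimed. A minimal sketch, assuming each prompt already has a 0 to 3 visibility score (like the scale in Step 2) and a hypothetical 1 to 3 commercial-value weight that your team assigns:

```python
from dataclasses import dataclass

MAX_SCORE = 3  # top of the 0-3 per-prompt visibility scale


@dataclass
class PromptResult:
    prompt: str
    score: int             # current visibility score, 0-3
    commercial_value: int  # hypothetical weight: 1 = education ... 3 = high intent


def prioritize(results: list[PromptResult], top_n: int = 10) -> list[PromptResult]:
    """Largest value-weighted visibility gaps first."""
    def gap(r: PromptResult) -> int:
        return r.commercial_value * (MAX_SCORE - r.score)
    return sorted(results, key=gap, reverse=True)[:top_n]
```

Multiplying the gap by commercial value means a high-intent prompt where you are weakly present can outrank an educational prompt where you are absent, which matches the advice to start with the prompts that matter for pipeline.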

The audit framework we use at Cogni

At Cogni, I prefer a four-layer model that connects prompt performance to content operations.

| Audit layer | What you measure | Typical findings |
|---|---|---|
| Prompt layer | Brand presence, citation frequency, prompt-specific outcomes | Strong presence on branded prompts, weak presence on category prompts |
| Content layer | Answer quality, structure, freshness, evidence density | Pages rank but do not provide extraction-friendly answer blocks |
| Authority layer | Topic ownership, external references, branded entity clarity | Brand known for product, not known for concept |
| Technical layer | Crawl access, schema, speed, canonicalization, internal linking | Good content hidden behind weak architecture |

That model keeps the audit from collapsing into either a ranking report or a qualitative brand memo. It stays operational.

Step 1: Build the right prompt set

Most failed audits start with the wrong prompts. Teams test whatever sounds interesting instead of what reveals market position.

Build a prompt set of 30 to 50 queries across these groups:

- “What is” questions tied to your category

- “How to” questions tied to your workflow

- “Best tools” and comparison prompts

- strategic prompts a buyer might ask before vendor shortlisting

- adjacent educational prompts where authority matters before intent is explicit

Include both generic and high-intent phrasing. For example, not just “what is generative engine optimization” but also “how do I improve brand visibility in ChatGPT” and “which tools help track AI citations.”
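Before testing starts, it is worth checking that the prompt set actually meets the brief. Here is a minimal sketch, assuming prompts are stored as a mapping from prompt text to a group label. The five group labels are hypothetical shorthand for the groups listed above.

```python
# Hypothetical labels for the five prompt groups described above.
REQUIRED_GROUPS = {"what_is", "how_to", "best_tools", "strategic", "adjacent"}


def validate_prompt_set(prompts: dict[str, str], min_size: int = 30,
                        max_size: int = 50) -> list[str]:
    """Return a list of problems; an empty list means the set is ready to test."""
    problems = []
    if not min_size <= len(prompts) <= max_size:
        problems.append(f"prompt set has {len(prompts)} prompts; target is {min_size}-{max_size}")
    missing = REQUIRED_GROUPS - set(prompts.values())
    if missing:
        problems.append("missing groups: " + ", ".join(sorted(missing)))
    return problems


draft = {
    "what is generative engine optimization": "what_is",
    "how do I improve brand visibility in ChatGPT": "how_to",
}
for problem in validate_prompt_set(draft):
    print(problem)
```

Keying the mapping by prompt text also quietly removes exact duplicates, which otherwise inflate scores for whichever topic someone typed twice.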

If you are in the early stages of content design, our guide on how to structure content for Google AI Overviews is a useful companion because it translates prompt intent into page structure. For broader tactical foundations, review the fundamentals of generative engine optimization.

Step 2: Create a scoring model

Qualitative notes are not enough. Give each prompt a simple score.

A practical model might look like this:

- 3 points: brand cited prominently

- 2 points: brand mentioned but not primary source

- 1 point: brand implied or summarized without explicit mention

- 0 points: brand absent

Then add modifiers for:

- source quality of the cited page

- prompt commercial value

- presence of competitor brands

- answer accuracy when your brand is mentioned

This gives you a visibility score that can be tracked over time instead of a one-off screenshot archive.
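The base points and modifiers can be combined in many ways. Here is a minimal sketch of one. The bonus, penalty, and weight values are hypothetical and exist only to show the shape of the calculation; calibrate them against your own audits.

```python
from dataclasses import dataclass

# Base points from the scale above.
BASE_POINTS = {"cited_prominently": 3, "mentioned": 2, "implied": 1, "absent": 0}


@dataclass
class PromptCheck:
    outcome: str                       # key into BASE_POINTS
    commercial_value: float = 1.0      # hypothetical multiplier, e.g. 2.0 for high-intent prompts
    high_quality_source: bool = False  # the cited page is the one you want winning
    competitors_present: int = 0       # competitor brands named in the same answer
    answer_accurate: bool = True       # the answer describes your brand correctly


def visibility_score(check: PromptCheck) -> float:
    points = float(BASE_POINTS[check.outcome])
    if points and check.high_quality_source:
        points += 0.5  # hypothetical bonus: the right page is doing the work
    if points and not check.answer_accurate:
        points -= 1.0  # hypothetical penalty: visible but misrepresented
    points -= 0.25 * min(check.competitors_present, 4)  # hypothetical crowding penalty
    return max(points, 0.0) * check.commercial_value


def audit_score(checks: list[PromptCheck]) -> float:
    """Average weighted score across the prompt set; track this month over month."""
    return sum(visibility_score(c) for c in checks) / len(checks) if checks else 0.0
```

For example, a prominent citation from the intended page on a high-intent prompt with one competitor also named scores (3 + 0.5 - 0.25) × 2.0 = 6.5. The exact numbers matter less than keeping them fixed between runs, so the trend line means something.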

Teams that turn prompt testing into a repeatable scorecard improve faster than teams that treat AI search like anecdotal brand monitoring. What gets measured at prompt level gets fixed at content level.

Step 3: Audit the pages behind the answers

Once you know which prompts matter and how your brand performs, move down one level into the content.

For every important prompt, ask:

- which page should be winning this question?

- does that page contain a direct definition or answer block?

- are the strongest claims backed by named sources?

- is the page updated with fresh numbers and examples?

- does the structure make extraction easy?

You are looking for mismatch between intent and page design.

In many audits, the problem is not absence. The problem is misalignment. The right page exists, but it was written for rankings or thought leadership rather than retrievability.
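The page questions translate naturally into a yes/no checklist per page. The sketch below pairs that checklist with a crude test for whether a page opens with a direct definition. Both the field names and the `answer_position` heuristic are hypothetical. The heuristic only catches the literal pattern "<term> is", so a definition like "GEO (generative engine optimization) is" will be missed.

```python
from dataclasses import dataclass, fields


@dataclass
class PageReview:
    url: str
    should_win_prompt: bool
    has_direct_answer_block: bool
    claims_have_named_sources: bool
    numbers_are_current: bool
    structure_is_extractable: bool

    def failing_checks(self) -> list[str]:
        """Names of the yes/no checks this page fails."""
        return [f.name for f in fields(self)
                if isinstance(getattr(self, f.name), bool) and not getattr(self, f.name)]


def answer_position(page_text: str, term: str) -> int:
    """Word offset of the first '<term> is' phrase, or -1 if the page never defines it that way."""
    words = page_text.lower().split()
    target = term.lower().split() + ["is"]
    for i in range(len(words) - len(target) + 1):
        if words[i:i + len(target)] == target:
            return i
    return -1


review = PageReview("/geo-guide", True, False, True, False, True)
print(review.failing_checks())  # ['has_direct_answer_block', 'numbers_are_current']
```

A large `answer_position` value, or -1, is a quick flag for the "answer buried below intro copy" problem described earlier.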

Step 4: Review entity and authority signals

AI systems do not only retrieve pages. They also infer which brands are associated with which concepts.

That means your audit should examine whether your brand is consistently tied to the topics you want to own. Signals include:

- repeated co-occurrence of brand and category terms

- bylined thought leadership on owned and external properties

- citations from third-party sources

- clean organization schema and author identity

- consistency in how your company is described across the site

This is where many B2B brands lose visibility. They have product pages, but not concept ownership. Their site explains what they sell, but not the market problem well enough to become a cited authority.
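Co-occurrence is one of the few entity signals you can measure directly on your own site. Here is a minimal sketch, assuming you already have page text keyed by URL. It uses plain substring matching, which is deliberately simple; the page texts in the example are hypothetical.

```python
def cooccurrence_rate(pages: dict[str, str], brand: str, term: str) -> float:
    """Share of pages mentioning the brand that also mention the category term."""
    brand_l, term_l = brand.lower(), term.lower()
    with_brand = [text.lower() for text in pages.values() if brand_l in text.lower()]
    if not with_brand:
        return 0.0
    return sum(term_l in text for text in with_brand) / len(with_brand)


pages = {
    "/": "Cogni helps teams measure AI search visibility.",
    "/pricing": "Cogni plans start here.",
    "/blog/answer-engines": "AI search visibility explained.",
}
print(cooccurrence_rate(pages, "Cogni", "AI search visibility"))  # 0.5
```

A low rate on your own pages suggests the site explains what you sell without tying the brand to the concept you want to own.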

Step 5: Check technical retrieval readiness

Technical readiness still matters. I would review:

- robots.txt rules affecting AI crawlers and search crawlers

- canonical conflicts across similar pages

- schema coverage for Article, FAQPage, and Organization markup

- Core Web Vitals on answer-focused pages

- internal links pointing toward canonical guides

- duplicate content that fractures answer equity
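The robots check is easy to script with Python's standard `urllib.robotparser`. Here is a minimal sketch that parses a robots.txt body you have already fetched. The user-agent tokens are ones the vendors have published, but verify them against each vendor's current documentation. Note that Google AI Overviews draw on regular Googlebot crawling, while Google-Extended is a separate control. Python's parser uses simple prefix matching, so wildcard rules may be evaluated differently than by the crawlers themselves.

```python
from urllib.robotparser import RobotFileParser

# Published user-agent tokens; confirm against current vendor docs before relying on them.
AI_CRAWLERS = ["GPTBot", "OAI-SearchBot", "PerplexityBot", "Google-Extended", "Googlebot"]


def crawler_access(robots_txt: str, url: str, agents: list[str] = AI_CRAWLERS) -> dict[str, bool]:
    """Report whether each crawler may fetch `url` under the given robots.txt body."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {agent: parser.can_fetch(agent, url) for agent in agents}


robots = """User-agent: GPTBot
Disallow: /

User-agent: *
Disallow: /private/
"""
for agent, allowed in crawler_access(robots, "https://example.com/guides/ai-visibility-audit").items():
    print(f"{agent}: {'allowed' if allowed else 'blocked'}")
```

Run it against your most important answer pages rather than the homepage. A blanket rule added years ago for a different reason is a common culprit.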

Some brands sabotage themselves by publishing multiple semi-overlapping guides for the same question. In classic SEO, that creates cannibalization. In AI search, it can also create uncertainty about which page contains the cleanest answer.
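A rough way to surface semi-overlapping guides is to compare pages by word shingles (runs of k consecutive words) and Jaccard similarity. Here is a minimal sketch, assuming you have extracted body text per URL. The 0.5 threshold and the example pages are hypothetical; real pages need boilerplate such as navigation and footers stripped first, or everything will look similar.

```python
import re
from itertools import combinations


def shingles(text: str, k: int = 3) -> set[tuple[str, ...]]:
    """Set of k-word sequences, lowercased with punctuation dropped."""
    words = re.findall(r"[a-z0-9]+", text.lower())
    return {tuple(words[i:i + k]) for i in range(len(words) - k + 1)}


def jaccard(a: set, b: set) -> float:
    if not a and not b:
        return 0.0
    return len(a & b) / len(a | b)


def overlapping_pairs(pages: dict[str, str], threshold: float = 0.5, k: int = 3):
    """Page pairs whose shingle overlap meets the threshold: candidates to merge."""
    sigs = {url: shingles(text, k) for url, text in pages.items()}
    pairs = []
    for u1, u2 in combinations(sorted(sigs), 2):
        score = jaccard(sigs[u1], sigs[u2])
        if score >= threshold:
            pairs.append((u1, u2, round(score, 2)))
    return pairs


pages = {
    "/blog/ai-audit": "An AI search visibility audit measures whether your brand is cited in AI answers.",
    "/guides/ai-audit": "An AI search visibility audit measures whether your brand is cited in generated answers.",
    "/pricing": "Plans and pricing for teams of every size.",
}
print(overlapping_pairs(pages))
```

Flagged pairs are consolidation candidates, not automatic merges. Decide which URL should own the answer, then redirect or canonicalize the other.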

What the output of the audit should look like

A good audit ends with a roadmap in three horizons.

Horizon 1: Quick fixes, 2 to 4 weeks

- rewrite direct definitions on key pages

- add FAQ sections to top informational assets

- improve internal links to canonical guides

- update stale statistics

Horizon 2: Content rebuilds, 1 to 2 months

- merge or consolidate overlapping pages

- rebuild thin posts into structured category assets

- create comparison pages for evaluation-stage prompts

- add author context and evidence to weak pages

Horizon 3: Authority building, ongoing

- publish original research

- build topic clusters intentionally

- expand third-party mentions and citations

- track prompt-level performance monthly

That is what turns an audit into an operating system.
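Monthly tracking only pays off if someone looks at what moved. Here is a minimal sketch, assuming each monthly run produces a mapping from prompt to score. Prompts added or dropped between runs are ignored rather than guessed at, and the example scores are hypothetical.

```python
def monthly_deltas(previous: dict[str, float], current: dict[str, float]) -> dict[str, float]:
    """Score change per prompt, for prompts tested in both runs."""
    shared = previous.keys() & current.keys()
    return {p: current[p] - previous[p] for p in sorted(shared)}


def regressions(previous: dict[str, float], current: dict[str, float],
                min_drop: float = 1.0) -> list[str]:
    """Prompts whose score fell by at least `min_drop` since the last run."""
    return [p for p, d in monthly_deltas(previous, current).items() if d <= -min_drop]


january = {"what is geo": 3, "best geo tools": 2, "how to track ai citations": 0}
february = {"what is geo": 3, "best geo tools": 0, "how to track ai citations": 2}
print(regressions(january, february))  # ['best geo tools']
```

A regression on a comparison prompt is usually the first thing to investigate, since it often means a competitor published something more extractable.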

Frequently Asked Questions

What is an AI search visibility audit?

It is a structured review of how your brand appears across answer engines like ChatGPT, Perplexity, Gemini, and Google AI Overviews. The goal is to measure citation frequency, answer presence, and the content or technical factors affecting visibility.

How is an AI visibility audit different from an SEO audit?

An SEO audit focuses on rankings, crawlability, and organic performance in traditional search results. An AI visibility audit adds prompt testing, citation analysis, answer extractability, and brand presence inside generated responses.

How often should teams run an AI search audit?

Monthly is a good baseline for fast-moving categories, especially if your team is actively publishing or updating key pages. Quarterly can work for slower-moving spaces, but anything less frequent usually misses important shifts.

What metrics should be included in an AI search visibility audit?

Track prompt-level presence, citation frequency, source page quality, competitor share, and content readiness. It also helps to score pages for definition clarity, evidence density, and FAQ coverage.

What is the first thing to fix after an audit?

Start with the highest-value prompts where your brand is absent or weakly represented. Then improve the page most likely to win that question by making the answer clearer, better structured, and better supported.

Conclusion

The teams that win AI search will not be the teams with the prettiest dashboards. They will be the teams with the clearest feedback loop between prompt performance and content operations.

Rankings still matter. But if your brand is invisible inside the answer layer, you are under-measuring the part of search that is changing fastest.

What you cannot see in AI search, you cannot improve. If you want a tighter system for measuring and improving answer visibility, Cogni is built for exactly that job.