What Content Format Wins the Most AI Citations?

Most content teams still think in formats built for clicks.

Listicles. Long-form guides. Opinion pieces. Glossaries. Original research. Product pages. Comparison pages.

All of those formats can work in traditional search. In AI search, they do not perform equally.

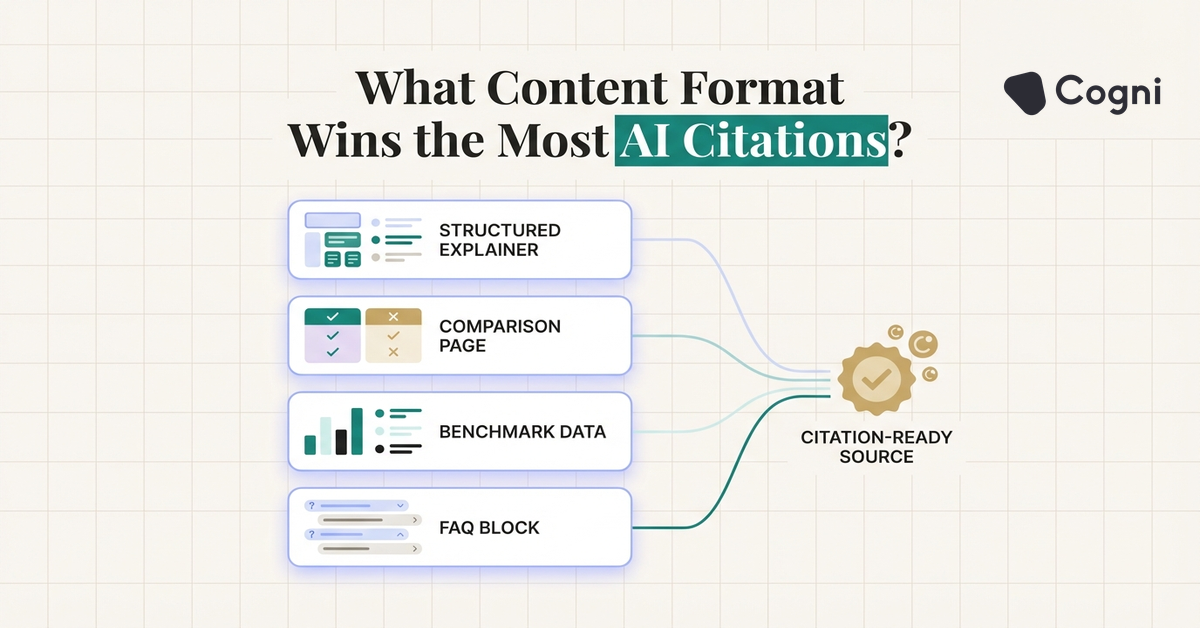

The best content format for AI citations is usually not a format category at all. It is a content shape: a page that answers a narrow question clearly, backs claims with evidence, and makes extraction easy.

That said, some page types do outperform others more consistently when answer engines decide what to cite.

This article breaks down which formats win most often, why they win, and how content teams should redesign their editorial mix if citation visibility is now a KPI.

- Definition pages, structured explainers, and comparison pages usually outperform broad thought leadership for AI citations

- Original research is powerful, but only when the findings are easy to extract and clearly attributed

- Format matters less than answer clarity, evidence density, and topical focus

- The highest-performing content libraries balance category pages, workflow guides, and citable data assets

Why citation-friendly content looks different

Answer engines do not choose pages the way a human reader does.

A human might click a provocative headline, skim a few sections, and stay for the writing style. An AI system has a narrower job. It needs material that can be retrieved, trusted, compressed, and inserted into an answer with minimal distortion.

That changes what wins.

Pages with broad framing and slow narrative buildup can still be useful. They are just less likely to become the quoted or cited core of a response than a page that answers the question directly.

The formats that usually perform best

Here is the pattern I see most often.

1. Structured explainers

These are the strongest all-around format for AI citation visibility.

A structured explainer usually includes:

- a direct answer near the top

- scannable headings

- concise paragraphs

- examples or edge cases

- supporting evidence with named sources

This format works because it aligns with how answer engines summarize. The system can identify a definition, pull supporting context, and compress both without losing the meaning, so the result stays trustworthy.

Our own guide to answer engine optimization follows this pattern because it is designed to answer, not just rank.

2. Comparison pages

Comparison pages often perform well for commercial prompts because they help with evaluation.

Examples include:

- tool A vs tool B

- GEO vs SEO

- AI search optimization platform comparison

- build vs buy framework pages

These pages are especially strong when the structure is explicit. Tables, side-by-side criteria, tradeoffs, and scenario-based recommendations all make the page easy to cite.

3. Definition-led category pages

If you want to own an emerging term, you need a page built to define it.

That means:

- the term appears in the H1

- the first paragraph defines it plainly

- related concepts are distinguished clearly

- the page includes examples, use cases, and FAQs

This is one reason glossary-style pages can work, but only if they go beyond thin dictionary content. The best category pages feel like mini field guides, not placeholder SEO assets.

4. Original research and benchmark roundups

These can become citation magnets, but only when the findings are usable.

A giant report hidden inside a PDF is often less citable than a simple article that presents one benchmark clearly and attributes it well.

If you publish original data, make sure:

- the methodology is visible

- the numbers are broken into digestible findings

- charts have textual interpretation

- the source and date are obvious

The citation value comes from extractable evidence, not the fact that the report exists.
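One way to enforce that checklist is to store each finding together with its source, date, and method, so none of them can go missing when the number is quoted. The sketch below is a minimal illustration, not a standard: the `Finding` class, its field names, and the sentence template are all assumptions, and the bracketed values are placeholders rather than real data.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class Finding:
    """One benchmark result packaged with the context needed to cite it (hypothetical structure)."""

    claim: str        # the finding as one sentence, without a trailing period
    source: str       # who produced the data
    published: date   # when the data was published
    methodology: str  # one-line description of how it was measured

    def __post_init__(self) -> None:
        # Refuse findings that are missing any of the text fields.
        for field_name in ("claim", "source", "methodology"):
            if not getattr(self, field_name).strip():
                raise ValueError(f"{field_name} must not be empty")

    def as_citable_sentence(self) -> str:
        """Render the finding with its source, date, and method attached."""
        return (
            f"{self.claim}, according to {self.source} "
            f"({self.published.isoformat()}; {self.methodology})."
        )


if __name__ == "__main__":
    # Placeholder values only; swap in your own published data.
    example = Finding(
        claim="[finding stated as one sentence]",
        source="[publisher name]",
        published=date(2000, 1, 1),
        methodology="[sample size and method]",
    )
    print(example.as_citable_sentence())
```

The point of the design is that the number never travels without its attribution. If a field is blank, the finding is rejected before it reaches the page.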

Formats that often underperform

Not every content type translates well into AI search.

Broad thought leadership

These articles can build brand voice and authority, but they are not always citation-friendly. If the core point is buried inside narrative or opinion, the engine has little clean material to lift.

Trend roundups without original structure

If the page mostly aggregates obvious news without adding a framework, it is easy for the model to skip.

Thin glossary pages

A 250-word definition page with no evidence, no examples, and no differentiation rarely earns durable answer-layer visibility.

Vague product pages

These are especially weak for AI search. If the page says a platform helps teams "unlock better outcomes" without specifying jobs, workflows, or categories, it gives the engine very little to work with.

What high-performing pages have in common

The common denominator is not topic alone. It is structure.

| Trait | Why it helps citations |
|---|---|
| Direct answer in opening section | Reduces ambiguity and increases extraction accuracy |
| Clear headings and subheadings | Helps retrieval systems isolate relevant sections quickly |
| Named evidence and dated sources | Improves trust when the answer needs support |
| Examples and tradeoffs | Makes the page usable for recommendation and advisory prompts |
| Focused scope | A tighter page is easier to cite than a sprawling everything-post |

The ideal editorial mix for citation growth

If your team wants more AI citations, do not bet everything on one format. Build a portfolio.

I would recommend this mix:

40% category and explainer pages

These are your foundation. They help with educational discovery and concept ownership.

30% commercial comparison and workflow pages

These help with evaluation-stage prompts and recommendation queries.

20% benchmark and research assets

These give you citable evidence and original data points.

10% opinion and narrative thought leadership

Keep these for brand voice and market perspective, but do not expect them to carry the whole citation strategy.
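To turn those percentages into a plan, here is a rough sketch that splits a fixed number of pieces across the four buckets. The percentages come from the mix above; the bucket labels, the `allocate_pieces` function, and the largest-remainder rounding are my own assumptions for illustration.

```python
from math import floor

# Percentages from the mix above; the keys are shorthand labels (assumed names).
EDITORIAL_MIX = {
    "category_and_explainer": 40,
    "comparison_and_workflow": 30,
    "benchmark_and_research": 20,
    "opinion_and_narrative": 10,
}


def allocate_pieces(total_pieces: int, mix: dict = EDITORIAL_MIX) -> dict:
    """Split total_pieces across formats so the counts always sum to the total."""
    if sum(mix.values()) != 100:
        raise ValueError("mix percentages must sum to 100")
    exact = {name: total_pieces * pct / 100 for name, pct in mix.items()}
    counts = {name: floor(value) for name, value in exact.items()}
    leftover = total_pieces - sum(counts.values())
    # Largest-remainder rounding: give leftover pieces to the formats
    # whose exact share had the biggest fractional part.
    by_remainder = sorted(mix, key=lambda name: exact[name] - counts[name], reverse=True)
    for name in by_remainder[:leftover]:
        counts[name] += 1
    return counts


if __name__ == "__main__":
    print(allocate_pieces(10))
```

With 10 pieces the split is simply 4, 3, 2, and 1. Totals that do not divide evenly still add up, because the rounding step hands out the leftover pieces instead of dropping them.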

How to turn an existing article into a more citable format

Most teams do not need to rewrite the whole library from scratch.

Start with underperforming pages and make these upgrades:

- add a direct definition in the first section

- break long paragraphs into tighter blocks

- insert named data points and sources

- add an FAQ section with questions phrased the way people actually ask them

- clarify comparisons and use cases

- reduce abstract language

For example, if a page discusses AI search generally, convert it into a structured explainer with sections on definition, examples, differences from SEO, and implementation steps.
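A few of those upgrades can be spot-checked automatically before an editor reviews the page. The sketch below is a crude heuristic pass over a plain-text draft, not a measure of citability: the `audit_draft` function, the 80-word paragraph threshold, and the FAQ detection pattern are all assumptions you should tune to your own style guide.

```python
import re

MAX_PARAGRAPH_WORDS = 80  # assumed threshold for a "long" paragraph


def audit_draft(text: str) -> dict:
    """Return simple structural signals for a plain-text draft (heuristic only)."""
    # Paragraphs are blocks of text separated by one or more blank lines.
    paragraphs = [p.strip() for p in re.split(r"\n\s*\n", text) if p.strip()]
    long_paragraphs = [p for p in paragraphs if len(p.split()) > MAX_PARAGRAPH_WORDS]
    # Look for an FAQ heading anywhere in the draft.
    has_faq = any(
        re.search(r"\b(faq|frequently asked questions)\b", p, re.IGNORECASE)
        for p in paragraphs
    )
    # Count lines that are phrased as questions.
    question_lines = [
        line.strip() for line in text.splitlines() if line.strip().endswith("?")
    ]
    return {
        "paragraph_count": len(paragraphs),
        "long_paragraphs": len(long_paragraphs),
        "has_faq_section": has_faq,
        "question_count": len(question_lines),
    }
```

A report like this only flags candidates for editing. Whether the opening section answers the question directly still needs a human read.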

The pages that earn the most citations are usually not the longest or the most opinionated. They are the easiest to trust, segment, and quote.

Frequently Asked Questions

What is the best content format for AI citations?

Structured explainers are usually the strongest format because they combine direct answers, clear headings, and evidence in a way answer engines can easily retrieve and summarize.

Do listicles work for AI search?

Sometimes, but only when they provide specific, evidence-backed value. Generic listicles with weak criteria are less likely to be cited than comparison pages or structured guides.

Is original research good for AI visibility?

Yes, especially when the findings are clearly attributed and easy to extract. Hidden data is not very citable. Visible findings are.

Are product pages useful for AI citations?

They can be, especially for recommendation and comparison prompts. But they need clear positioning, specific use cases, and explicit workflow details.

Final thought

If your editorial calendar is still built around clickbait-era format logic, AI search will expose the weakness quickly.

The winning format is not "blog post" or "report" in the abstract. It is any page designed to answer one important question clearly enough that an answer engine trusts it with minimal cleanup.

That is the standard content teams should build for now.