Do You Need llms.txt for AI Search? What Actually Matters in 2026

- llms.txt can help AI agents discover your preferred content map, but it does not guarantee citations or rankings

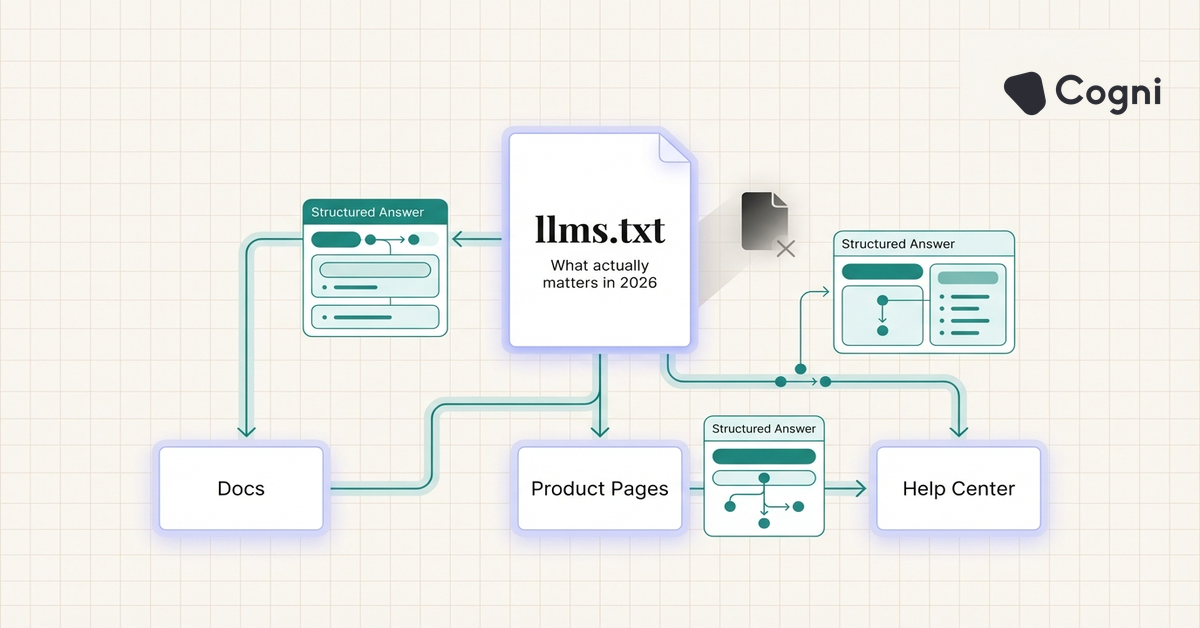

- Fast, crawlable pages, structured answers, and strong internal linking still matter more than a standalone llms.txt file

- Many teams are treating llms.txt like schema in 2015: interesting and promising, but not a substitute for content quality

- At Cogni, we have seen AI-visible pages improve faster when llms.txt is paired with FAQ structure and clean crawl paths

Most teams talking about AI search infrastructure are currently overestimating one file.

llms.txt has become the newest checkbox in technical SEO circles. The pitch is simple: publish a machine-readable file that helps large language model agents understand your site, and your content becomes easier to use in AI answers. That framing is directionally right, but operationally incomplete. A file does not create authority. It does not fix weak content. It does not make a messy site retrievable.

llms.txt is a proposed site-level Markdown file, served from the site root at /llms.txt, that gives AI agents a cleaner map of your most important content, preferred summaries, and documentation paths. It is best understood as a discovery aid, not a visibility hack. In practice, it can reduce ambiguity for retrieval systems, but only when the underlying content is already worth extracting.

The timing explains the hype. According to Gartner in 2024, traditional search engine volume is projected to decline 25% by 2026 as AI-native search behavior grows. According to Similarweb in 2025, ChatGPT remained among the fastest-growing referral sources to publisher sites, while Perplexity continued gaining share in high-intent research workflows. As more discovery moves into answer interfaces, technical teams are looking for infrastructure patterns that make content easier for AI systems to parse.

The mistake is assuming llms.txt is that pattern by itself.

What llms.txt actually does

llms.txt is meant to provide a compact, explicit guide for AI agents visiting your domain. A good implementation points to the pages you most want interpreted correctly, explains what the site covers, and sometimes provides summaries of key sections or documents.

In plain language, it answers three questions for a machine:

- What is this site about?

- Which pages matter most?

- Where should an agent look for canonical information?

That is useful. It is also limited.
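To make the three questions concrete, here is a minimal sketch of how an agent might read the file. It assumes the layout described in the public llms.txt proposal (an H1 with the site name, a blockquote summary, and H2 sections containing link lists). The `LlmsTxt` class, the `parse_llms_txt` function, and the example.com URLs are hypothetical names used for illustration, and real agents may parse the file differently or ignore it entirely.

```python
import re
from dataclasses import dataclass, field

# Matches "- [Name](https://url): optional note" link-list entries.
LINK_RE = re.compile(
    r"^-\s*\[(?P<name>[^\]]+)\]\((?P<url>[^)\s]+)\)(?::\s*(?P<note>.*))?$"
)


@dataclass
class LlmsTxt:
    title: str = ""    # What is this site about? (name)
    summary: str = ""  # What is this site about? (one-line summary)
    # Which pages matter most, grouped by section: (name, url, note)
    sections: dict[str, list[tuple[str, str, str]]] = field(default_factory=dict)


def parse_llms_txt(text: str) -> LlmsTxt:
    doc = LlmsTxt()
    current = None  # name of the H2 section being read, if any
    for raw in text.splitlines():
        line = raw.strip()
        if line.startswith("# ") and not doc.title:
            doc.title = line[2:].strip()
        elif line.startswith("> ") and not doc.summary:
            doc.summary = line[2:].strip()
        elif line.startswith("## "):
            current = line[3:].strip()
            doc.sections[current] = []
        elif current is not None:
            match = LINK_RE.match(line)
            if match:
                doc.sections[current].append(
                    (match["name"], match["url"], match["note"] or "")
                )
    return doc


# Hypothetical file contents for illustration only.
SAMPLE = """\
# Example Docs

> Product documentation and guides for the hypothetical Example platform.

## Docs

- [Quickstart](https://example.com/docs/quickstart): Install and first request
- [API reference](https://example.com/docs/api)

## Guides

- [Glossary](https://example.com/glossary): Definitions of core terms
"""

doc = parse_llms_txt(SAMPLE)
print(doc.title)
print(doc.summary)
for section, links in doc.sections.items():
    print(section, [url for _, url, _ in links])
```

Notice what the parser cannot tell an agent: whether the linked pages are fast, current, or well structured. That information only exists on the pages themselves.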

AI systems that cite live web results still depend on page quality, crawlability, freshness, and extractable structure. Anthropic and OpenAI both document web retrieval patterns that rely on accessible pages and useful page-level content, not just site-level hints. Google’s Search Central guidance also keeps returning to the same principle: make your content crawlable, useful, and clearly structured.

At Cogni, I have observed that teams get more value from llms.txt when they already have a disciplined content architecture. If your site has ten overlapping pages targeting the same concept, llms.txt cannot resolve that confusion on its own. If your best answers are buried in product pages with weak headings, the file will not magically create snippet-ready copy.

Why the file matters less than people think

The reason llms.txt is getting outsized attention is that it feels controllable. It is a single file. Engineering can ship it in an afternoon. It creates the impression of “doing AI SEO” without touching the harder problems.

Those harder problems are still the ones that move visibility:

- writing definitional paragraphs that answer exact user questions

- structuring pages so sections can be lifted into AI responses

- consolidating duplicate content across adjacent keywords

- keeping important pages fast and publicly accessible

- building topical authority strong enough to deserve citation

According to BrightEdge Research in 2025, 57% of search queries now trigger AI Overviews. According to SparkToro and Datos in 2024, the majority of Google searches already end without a click. Those shifts reward sites that can supply direct, extractable answers. llms.txt supports that process only indirectly.

The strongest AI-visible pages are usually not the pages with the fanciest machine instructions. They are the pages with the clearest answer blocks, best information gain, and cleanest crawl paths.

Where llms.txt can help

There are three cases where I think llms.txt is worth implementing right now.

1. Documentation-heavy sites

If your company has product docs, API references, help centers, and long-form educational content spread across multiple subfolders, llms.txt can act like a routing layer. It helps an agent identify canonical sections faster.

2. Sites with strong content but weak discoverability

Some teams already have excellent pages but poor internal linking or fragmented hub structure. A concise llms.txt file can highlight the pages that represent the cleanest answers on the domain.

3. Brands trying to reduce ambiguity

If your site covers multiple adjacent topics, the file can make your positioning clearer. That is particularly helpful when your product category overlaps with broader industry terms.

What to prioritize before you publish one

A lot of teams are implementing llms.txt in the wrong order. The better sequence is:

| Priority | What to fix first | Why it matters for AI visibility |
|---|---|---|
| 1 | Clear H1, H2s, and definition paragraphs | AI systems need extractable answer units before they need a site map |
| 2 | Internal linking to canonical pages | Helps crawlers and retrieval systems identify which page should represent a topic |
| 3 | FAQ and HowTo schema where relevant | Adds machine-readable structure directly to the page most likely to be cited |
| 4 | Page speed and indexability checks | Slow or blocked pages often fail before retrieval even happens |
| 5 | llms.txt implementation | Useful as an assistive layer once the content foundation is already solid |

That sequence is less exciting than “ship one file and win AI search,” but it is much closer to reality.
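As one illustration of priority 3, the sketch below builds schema.org FAQPage markup from question and answer pairs. The `FAQPage`, `Question`, and `Answer` types are standard schema.org vocabulary. The helper names `faq_jsonld` and `as_script_tag` are hypothetical, and how your CMS injects the resulting tag is left as an assumption.

```python
import json


def faq_jsonld(pairs):
    """Build a schema.org FAQPage object from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }


def as_script_tag(data):
    """Serialize JSON-LD for embedding in a page's HTML."""
    payload = json.dumps(data, indent=2, ensure_ascii=False)
    # Escape "</" so answer text can never close the script element early.
    payload = payload.replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{payload}\n</script>'


faqs = [
    (
        "Is llms.txt the same as robots.txt?",
        "No. robots.txt controls crawler access rules, while llms.txt is "
        "intended to guide AI agents toward important content and context.",
    ),
]
print(as_script_tag(faq_jsonld(faqs)))
```

The markup should mirror question and answer text that is actually visible on the page, so generating both from the same source pairs is a reasonable design choice.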

A practical implementation framework

If you are going to publish llms.txt, keep it focused.

- identify 10 to 25 canonical pages

- group them by topic or use case

- write one sentence on what each cluster covers

- avoid stuffing every page on the domain into the file

- refresh it when your site architecture changes
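The checklist above can be sketched as a small generator. This is a minimal example, not a reference implementation: `build_llms_txt`, its parameters, and the example.com URLs are hypothetical, and placing the one-sentence cluster description directly under each H2 heading is a layout choice of this sketch rather than something the llms.txt proposal prescribes.

```python
def build_llms_txt(site_name, summary, clusters, min_pages=10, max_pages=25):
    """Render llms.txt text from topic clusters.

    clusters maps a cluster name to
    (one_sentence_description, [(title, url), ...]).
    """
    urls = [url for _, pages in clusters.values() for _, url in pages]
    if len(urls) != len(set(urls)):
        raise ValueError("each canonical URL should appear only once")
    # Enforce the 10 to 25 page guideline so the file stays focused.
    if not min_pages <= len(urls) <= max_pages:
        raise ValueError(f"expected {min_pages}-{max_pages} pages, got {len(urls)}")

    lines = [f"# {site_name}", "", f"> {summary}", ""]
    for name, (description, pages) in clusters.items():
        lines += [f"## {name}", "", description, ""]
        lines += [f"- [{title}]({url})" for title, url in pages]
        lines.append("")
    return "\n".join(lines).rstrip() + "\n"


# Hypothetical clusters for illustration only.
clusters = {
    "Docs": (
        "Product documentation and API reference.",
        [(f"Doc {i}", f"https://example.com/docs/{i}") for i in range(1, 7)],
    ),
    "Guides": (
        "Tactical guides and glossary pages.",
        [(f"Guide {i}", f"https://example.com/guides/{i}") for i in range(1, 5)],
    ),
}
print(build_llms_txt("Example", "Hypothetical site used for illustration.", clusters))
```

Regenerating the file from a single list of canonical pages, rather than editing it by hand, makes it easier to keep current when the site architecture changes.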

I would also pair the file with a content audit. Check whether the pages you are highlighting actually contain direct definitions, up-to-date data, and section-level clarity. If they do not, you are just pointing AI systems toward average content faster.
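Part of that audit can be automated. The sketch below covers only the structural checks (one H1, at least one H2, no `noindex` directive) using Python's standard `html.parser` module. Whether a page contains a direct definition or up-to-date data still needs human review. The `PageAudit` class and `audit` function are hypothetical names, and the thresholds are assumptions you should adjust for your own templates.

```python
from html.parser import HTMLParser


class PageAudit(HTMLParser):
    """Collects basic structural signals from one HTML document."""

    def __init__(self):
        super().__init__()
        self.h1 = 0
        self.h2 = 0
        self.noindex = False

    def handle_starttag(self, tag, attrs):
        if tag == "h1":
            self.h1 += 1
        elif tag == "h2":
            self.h2 += 1
        elif tag == "meta":
            attributes = dict(attrs)
            name = (attributes.get("name") or "").lower()
            content = (attributes.get("content") or "").lower()
            if name == "robots" and "noindex" in content:
                self.noindex = True


def audit(html):
    """Return a list of structural issues found in the page HTML."""
    parser = PageAudit()
    parser.feed(html)
    parser.close()
    issues = []
    if parser.h1 != 1:
        issues.append(f"expected exactly one <h1>, found {parser.h1}")
    if parser.h2 == 0:
        issues.append("no <h2> sections to extract")
    if parser.noindex:
        issues.append("page is marked noindex")
    return issues


# Hypothetical page for illustration only.
page = """
<html><head><meta name="robots" content="noindex, follow"></head>
<body><h1>Pricing</h1><p>Plans and limits.</p></body></html>
"""
for issue in audit(page):
    print(issue)
```

Running this over every URL you plan to list is a quick way to catch pages that should be fixed before they are highlighted.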

For brands that want stronger AI retrieval performance, the more important move is usually building a dedicated answer layer into the site. That means glossary pages, FAQ hubs, comparison pages, and tightly structured tactical guides. We use this approach at Cogni because it creates reusable answer blocks that both humans and AI systems can interpret cleanly.

The strategic takeaway

llms.txt is worth testing, but not worshipping.

The strongest teams will treat it the same way mature SEO teams treat sitemaps, canonical tags, and schema: supporting infrastructure, not the strategy itself. Infrastructure helps good content travel. It does not make bad content competitive.

If your site already has a strong information architecture, llms.txt can reduce ambiguity and improve discovery efficiency. If your content is thin, duplicative, or structurally weak, the better investment is fixing that first.

In AI search, the winning pattern is still boring in the best possible way: better answers, clearer structure, stronger evidence, faster pages.

Does llms.txt improve AI citations?

It can help AI agents discover canonical content more efficiently, but it does not directly guarantee citations. Pages still need strong structure, relevance, and evidence to be selected.

Is llms.txt the same as robots.txt?

No. robots.txt controls crawler access rules, while llms.txt is intended to guide AI agents toward important content and context. One is about permission, the other is about orientation.
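Because the two files do different jobs, they can contradict each other: a page promoted in llms.txt may still be disallowed in robots.txt. The sketch below uses Python's standard `urllib.robotparser` to flag that conflict. The `blocked_urls` helper and the example.com rules are hypothetical, and individual crawlers may interpret robots.txt rules somewhat differently from this parser.

```python
from urllib.robotparser import RobotFileParser


def blocked_urls(robots_txt, urls, user_agent="*"):
    """Return the URLs that robots.txt disallows for the given user agent."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [url for url in urls if not parser.can_fetch(user_agent, url)]


# Hypothetical robots.txt rules and llms.txt links for illustration only.
ROBOTS = """\
User-agent: *
Disallow: /internal/
"""

listed_in_llms_txt = [
    "https://example.com/docs/quickstart",
    "https://example.com/internal/roadmap",
]

for url in blocked_urls(ROBOTS, listed_in_llms_txt):
    print("Listed in llms.txt but disallowed by robots.txt:", url)
```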

Should every site publish llms.txt?

Not necessarily. It is most useful for content-rich sites with docs, help centers, or multiple topical clusters. Small sites with weak content foundations will not get much benefit from it alone.

What matters more than llms.txt for AI search?

Clear definition paragraphs, strong H2 structure, FAQ sections, internal linking, and fast, crawlable pages matter more. Those changes improve extractability at the page level, which is where AI systems actually pull answers.

Can llms.txt replace schema markup?

No. Schema gives machine-readable structure directly on the page, which is more actionable for search and AI extraction. llms.txt is a supplemental discovery layer, not a replacement.

Conclusion

The right question is not “Should we add llms.txt?” It is “Have we already done the work that makes llms.txt useful?”

Teams that treat AI visibility like a systems problem will win more than teams looking for one-file shortcuts. Publish the file if it helps your architecture. Just do not confuse orientation with authority.

AI search does not reward the site with the newest file; it rewards the site with the clearest answer. Explore how Cogni helps teams build that answer layer at meetcogni.com.